Here are the iOS frameworks Apple’s Catalyst brings to the Mac

Catalyst is here, Project Marzipan never existed, and we may soon see tens of thousands of iPad apps ported easily to the Mac.

What is Catalyst?

Catalyst is Apple’s well-thought-through system that lets developers easily port their iPad apps to the Mac. It consists of new tools within Xcode (essentially, you just need to tick a box) and built-in Mac support for a huge number of APIs that let your iPad apps run natively. I thought some readers might be interested to see a list of them.
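What does "tick a box" look like in code? Mostly nothing changes, but where an app should behave differently on the Mac, Swift can branch at compile time on the `targetEnvironment(macCatalyst)` condition. Here's a minimal sketch; the function name and strings are my own, purely for illustration:

```swift
// Minimal sketch: one codebase, different behaviour per build target.
// targetEnvironment(macCatalyst) is true only when the iPad app is built for the Mac.
func runtimeDescription() -> String {
    #if targetEnvironment(macCatalyst)
    return "iPad app running on macOS via Catalyst"
    #elseif os(iOS)
    return "iPad or iPhone build"
    #else
    return "some other platform"
    #endif
}

print(runtimeDescription())
```

You'd use a check like this sparingly, for instance to hide an iPad-only feature on the Mac; the point of Catalyst is that most code compiles unchanged.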

AppKit for the Mac

AppKit is the framework used to build Mac apps, while UIKit is what you use for iOS.

However, with over a million iPad apps that Apple thinks could run on the Mac, the company is adding dozens of iOS frameworks and libraries to macOS to make it much easier to create an iPad app and deploy it to a Mac, starting this fall.

So, which iOS frameworks will be supported on Macs? Nearly all of them: the list includes almost all of Apple’s iOS APIs, bar the mobile-only ones. Here are the frameworks and libraries Apple is bringing to macOS to help kick-start iPad apps reaching Macs:

IdentityLookup

Introduced in 2017, Identity Lookup is a framework that allows developers to help users identify unwanted messages.

VideoSubscriberAccount

This is the software that lets you sign in just once on an iOS device in order to access your choice of video streaming services from cable/TV providers on the device.

Natural Language

An iOS 12 framework that empowers apps to understand natural language text. It enables tokenization, language identification, and detection of names, places and organizations in an app.
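A rough sketch of two of those jobs (tokenization and language identification) in Swift, using the `NLTokenizer` and `NLLanguageRecognizer` classes from NaturalLanguage. The `analyse` helper is hypothetical, and the `#if canImport` guard simply keeps the snippet compiling on platforms without the framework:

```swift
#if canImport(NaturalLanguage)
import NaturalLanguage
#endif

// Hypothetical helper: splits text into words and guesses its dominant language.
// Returns nil where the NaturalLanguage framework isn't available.
func analyse(_ text: String) -> (words: [String], language: String?)? {
    #if canImport(NaturalLanguage)
    // Word-level tokenizer over the whole string.
    let tokenizer = NLTokenizer(unit: .word)
    tokenizer.string = text
    let words = tokenizer.tokens(for: text.startIndex..<text.endIndex)
        .map { String(text[$0]) }
    // Language identification yields an NLLanguage; rawValue is a code such as "en".
    let language = NLLanguageRecognizer.dominantLanguage(for: text)?.rawValue
    return (words, language)
    #else
    return nil
    #endif
}

if let result = analyse("Catalyst brings iPad apps to the Mac.") {
    print(result.words)
    print(result.language ?? "undetermined")
}
```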

iAd

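You used to be able to build apps that displayed iAds in a defined area of their user interface, which could be a nice little earner for developers, but Apple closed the iAd App Network in 2016. The framework now mainly serves Apple Search Ads attribution.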

AudioUnit

Add sophisticated audio manipulation and processing capabilities to your app.

UserNotifications

Push user-facing notifications to the user’s device from a server, or generate them locally from your app.

ContactsUI

Display information about users’ contacts in a graphical interface.

OpenAL

OpenAL, a cross-platform audio API, renders positional 3D sound quickly.

SpriteKit

Metal-leveraging framework for drawing shapes, particles, text, images, and video in two dimensions.

CoreData

Core Data is a framework that you use to manage the model layer objects in your application.

Accelerate

Libraries that provide high-performance, energy-efficient computation on the CPU by leveraging its vector-processing capability.
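To show what leveraging that vector-processing capability looks like, here is a small sketch that adds two arrays with `vDSP_vaddD`, one of Accelerate's vDSP routines. The `addVectors` name is mine, and the plain-loop fallback (an ordinary, unaccelerated technique) exists only so the snippet compiles where Accelerate is missing:

```swift
#if canImport(Accelerate)
import Accelerate
#endif

// Adds two equal-length vectors element by element.
// Uses vDSP's vectorised addition where Accelerate exists, a plain loop otherwise.
func addVectors(_ a: [Double], _ b: [Double]) -> [Double] {
    precondition(a.count == b.count, "vectors must be the same length")
    #if canImport(Accelerate)
    var result = [Double](repeating: 0, count: a.count)
    // Arguments: input A, stride, input B, stride, output, stride, element count.
    vDSP_vaddD(a, 1, b, 1, &result, 1, vDSP_Length(a.count))
    return result
    #else
    return zip(a, b).map { $0 + $1 }
    #endif
}

print(addVectors([1, 2, 3], [10, 20, 30]))   // [11.0, 22.0, 33.0]
```

For three elements the speed-up is irrelevant; the payoff comes with large audio, image or scientific arrays.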

Neural text to speech means Siri voice is more natural and has a better handling of cadence – it is very much more natural sounding the example proved. Though voice doesn’t photograph well 🙂 #wwdc pic.twitter.com/UatGTgCjzy

— jonny evans (@jonnyevans_cw) June 3, 2019

EventKit

Access to calendar and reminders data so you can create, retrieve, and edit calendar items in your app.

CoreFoundation

Core Foundation is a C-based framework that provides fundamental software services, such as strings, collections and run loops, used by application services, application environments, and applications themselves.

IOKit

Lets apps access hardware devices and their drivers.

Security

A set of frameworks that let you establish user identity, secure data and ensure code validity.

NetworkExtension

Allows apps to customize and extend the core networking features of iOS and OS X.

VideoToolbox

This provides video compression and decompression, and conversion between raster image formats, using hardware encoders and decoders where the system has them.

EventKitUI

This is the code that provides an interface for viewing, selecting, and editing calendar events and reminders.

CoreText

CoreText provides what you need to create text layouts and handle fonts in documents.

PDFKit

Want to look at a PDF? Want to let people interact with a PDF? That’s PDFKit.

MediaAccessibility

An important API that coordinates the presentation of closed-captioned data with the relevant media files.

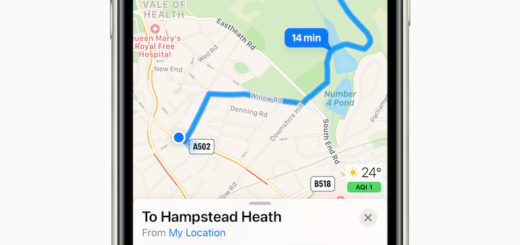

MapKit

“Display map or satellite imagery from your app’s interface, call out points of interest, and determine placemark information for map coordinates,” Apple states.

Developers get these built in to accelerate app dev for apps across apples platforms #wwdc pic.twitter.com/Uvfw76nnJ8

— jonny evans (@jonnyevans_cw) June 3, 2019

ModelIO

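Despite the name, this won’t tell you which model of device you’re using: Model I/O imports, exports and manipulates 3D assets, along with their materials and lighting.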

SafariServices

Display web content in an in-app Safari view and enable other Safari-related services in an app.

CoreAudioKit

This contains APIs developers can use in order to create user interfaces for audio units.

PushKit

This sends specific types of notifications (Apple notes VoIP invites, watchOS complications and file provider notifications) to your app for processing.

AdSupport

This gives apps the advertising identifier they need to serve up targeted ads, though you must honor any ad-tracking opt-out sensible users may have chosen.

Vision

Face and face landmark detection, text detection, barcode recognition, image registration, and general feature tracking.

MediaPlayer

Part of MusicKit, this controls playback of a user’s media within an app.

FileProvider

Use this to let other apps access documents and directories stored and managed by your app.

QuickLook

Display thumbnail images and full-size previews of documents.

Contacts

This is already available on both Macs and iOS, and lets apps access a person’s contact information.

GameplayKit

I’m guessing this may well end up being a widely used API. It provides tools and technologies for building games.

Over a week and still pondering #wwdc pic.twitter.com/8Gii3e2dfR

— jonny evans (@jonnyevans_cw) June 11, 2019

Accounts

This provides access to user accounts stored in the Accounts database. Apple’s documentation notes its use with Twitter, but I imagine it will also be pretty handy to those using Sign in with Apple.

StoreKit

Support in-app purchases and interactions with the App Store.

ReplayKit

Record or stream video from the screen, and audio from the app and microphone.

ClassKit

Educational apps may use ClassKit to assign activities and view student progress within apps.

GameKit

Add leaderboards, achievements, matchmaking, challenges, and other interactive features to games.

LocalAuthentication

Authenticate users with Touch ID or Face ID, or using their passcode.
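Here's a hedged sketch of the usual flow with `LAContext`: check the policy can be evaluated, then prompt. It uses the `.deviceOwnerAuthentication` policy, which allows the passcode fallback mentioned above. The `authenticateUser` wrapper is hypothetical, and the `#if canImport` guard only keeps it compiling where the framework is absent:

```swift
import Foundation
#if canImport(LocalAuthentication)
import LocalAuthentication
#endif

// Sketch: authenticate with Touch ID / Face ID, falling back to the device passcode.
// .deviceOwnerAuthentication allows that fallback; .deviceOwnerAuthenticationWithBiometrics would not.
// `completion` gets true on success, false otherwise (including where the framework is missing).
func authenticateUser(reason: String, completion: @escaping (Bool) -> Void) {
    #if canImport(LocalAuthentication)
    let context = LAContext()
    var error: NSError?
    // Checks that biometrics or a passcode is set up before prompting.
    guard context.canEvaluatePolicy(.deviceOwnerAuthentication, error: &error) else {
        completion(false)
        return
    }
    // The reply closure runs on a private queue, not the main thread.
    context.evaluatePolicy(.deviceOwnerAuthentication, localizedReason: reason) { success, _ in
        completion(success)
    }
    #else
    completion(false)
    #endif
}
```

Because of that private queue, hop back to the main queue before touching any UI in the completion handler. Apps that use Face ID also need an `NSFaceIDUsageDescription` entry in their Info.plist.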

BusinessChat

Businesses can use this to add a button that starts a Business Chat conversation from within their apps.

WebKit

So important it hardly needs an introduction: WebKit displays interactive web content in your app.

MediaToolbox

Interfaces for audio content playback.

CFNetwork

Access network services and handle changes in network configurations.

CoreLocation

Where am I? That’s what CoreLocation figures out by finding the geographic location and orientation of a device.

DeviceCheck

DeviceCheck lets you store two bits of data per device with Apple. “You might use this data to identify devices that have already taken advantage of a promotional offer that you provide, or to flag a device that you’ve determined to be fraudulent,” Apple states.

JavaScriptCore

Evaluate JavaScript programs from within an app, and support JavaScript scripting of your app.
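A minimal sketch of the first half of that, evaluating a script with a `JSContext` and reading back a number. The `evaluateNumber` helper is hypothetical, and the `#if canImport` guard is only there so it compiles where JavaScriptCore isn't present:

```swift
#if canImport(JavaScriptCore)
import JavaScriptCore
#endif

// Hypothetical helper: evaluates a JavaScript snippet and returns a numeric result.
// Returns nil if JavaScriptCore is unavailable or the script doesn't produce a number.
func evaluateNumber(_ script: String) -> Double? {
    #if canImport(JavaScriptCore)
    // JSContext() creates a fresh global environment in a new virtual machine.
    guard let context = JSContext(),
          let value = context.evaluateScript(script),
          value.isNumber else { return nil }
    return value.toDouble()
    #else
    return nil
    #endif
}

let total = evaluateNumber("[1, 2, 3].reduce((a, b) => a + b, 0)")
print(total ?? .nan)
```

Scripting your app from JavaScript (the second half) works the other way round, by exposing Swift objects to the context.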

FileProviderUI

Add custom actions to the context menu the document browser shows for your file provider’s files.

ImageIO

This important code lets apps write and read most image formats.

ExternalAccessory

You’ll need this to talk to Made for iPhone/iPad (MFi) accessories connected using the Lightning connector or Bluetooth.

Speech

Speech recognition inside apps.

AVKit

Use this to give audio and video apps the standard system playback interface and controls.

CoreServices

Access and manage key operating system services, such as launch and identity services.

PassKit

Request and process Apple Pay payments and distribute Wallet passes.

Photos

Search for and display photos.

CloudKit

Put app and user data in iCloud.

CoreSpotlight

Want your app to be searchable? You’ll use CoreSpotlight.

AudioToolbox

Record and play audio, convert it between formats, and more with AudioToolbox.

GameController

The hint is in the name: it adds support for hardware game controllers.

CoreImage

Build apps that process still and video images with Apple’s built-in CoreImage tools.

SceneKit

You’ll use this to create 3D experiences.

MultipeerConnectivity

Want devices using your app to find each other? That’s what this does.

CoreGraphics

I owe so much to CoreGraphics. It handles 2D rendering, antialiased rendering, gradients, images, color management, PDF documents and loads more.

IOSurface

Use this to share hardware-accelerated buffers, such as framebuffers and textures, across processes.

CoreAudio

I owe a lot to CoreAudio too. It generated a revolution in music production and is the digital audio infrastructure of iOS and OS X.

CoreVideo

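CoreVideo handles the frame-by-frame video pipeline (pixel buffers, display-synchronized timing) that video editors like iMovie depend on, pretty much.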

CoreBluetooth

You’ll need this to make apps that speak to Bluetooth devices.

SystemConfiguration

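Despite the name, this isn’t about decoding error codes: SystemConfiguration lets apps read a device’s network configuration settings and check whether a host is reachable.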

CoreMedia

Use this to efficiently process media samples and manage queues of media data.

Network

Create network connections to send and receive data using transport and security protocols such as TCP, UDP and TLS.

Authentication Services

Use this so users don’t have to jump through hoops to authenticate.

MetalKit

Build Metal apps faster with this: it handles common chores such as managing a Metal-backed view and loading textures.

AVFoundation

Work with time-based audiovisual media on iOS, macOS, watchOS and tvOS.

QuartzCore

Better known as Core Animation, this is the graphics rendering and animation infrastructure used to animate apps’ visual elements.

MobileCoreServices

Create and work with the uniform type identifiers (UTIs) that describe the kinds of data your app exchanges with other apps.

Metal

Want to get the full power of the GPU? Use Metal.

NB: This list may contain errors, be poorly explained or be incomplete. Some of these APIs were already available on both the Mac and iOS, but I guess this has now become official. The list comes from a publicly available WWDC State of the Union video, where it shows up briefly at about 33:21.

Please follow me on Twitter, or join me in the AppleHolic’s bar & grill and Apple Discussions groups on MeWe.
