Biostar 2 security failure shows why Apple’s Face ID is biometrics done better

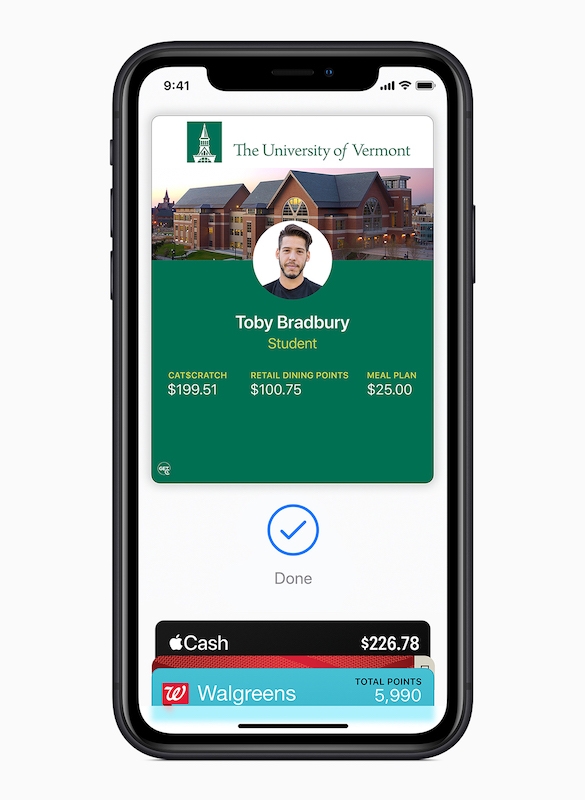

Student uses iPhone to enter campus

Security researchers recently found that fingerprints and heaps of other data belonging to tens of millions of people were stored unprotected online by Suprema, whose Biostar 2 access control platform is used by big businesses and defence contractors.

The fact that this data was stored online at all is proof positive that Apple's Face ID approach is the only way biometric data should ever be handled.

Companies that store personal data insecurely should be punished

There are tons of potential risks in the recently revealed security flaw, not least that people's fingerprints were made available in unencrypted and insecure form.

It was also possible to change data held in the database, which could let criminals alter a person's records to gain access to buildings or accounts.

Apart from being a painfully egregious error on the company's part, this problem also represents an error of judgement by all of its clients, who should surely have carried out due diligence to ensure their data was kept confidential.

Student uses iPhone as room key

Apple is private by design

Apple's systems don't work the same way. Face ID stores a mathematical representation of your face only on your device, protected by the Secure Enclave.

Touch ID works the same way, and while you need to set a device passcode before enabling either feature, there is no need for Apple, or anyone else, to keep records about you.

No one knows your fingerprint but you and your device.

Any personal data Apple does use is encrypted, protected and kept highly secure. (You should still always use an alphanumeric passcode.)

Biometric ID should be private too

This is why Apple’s biometrics are a truly viable option for companies, colleges and other entities seeking highly secure biometric authorization systems.

It seems pretty clear Apple is building up to make this happen.

Not only does its whole Wallet/Apple Pay business plan seek to replace the contents of your physical wallet (including your credit cards), it also seeks to replace keys and other forms of identity.

One day your Apple Watch will be your passport…

The company isn't (quite) going full steam ahead on this, at least not yet. Some aspects of its plan are already in the field, others are being discussed, and others are currently being tested in the real world.

Take door and building entry systems. Apple has been working with colleges and universities across the U.S. to develop and support iPhone- and Apple Watch-based biometric student ID systems.

A student ID card now lives in your iPhone

What happens at college stays at college…

These are in place at a huge number of U.S. colleges and universities (a dozen more joined the scheme in August) and let students access premises with nothing more than a flick of their iPhone or Apple Watch.

They can also purchase items on campus, access gym facilities and use many other student services.

“We’re happy to add to the growing number of schools that are making getting around campus easier than ever with iPhone and Apple Watch,” said Jennifer Bailey, Apple’s vice president of Internet Services.

“We know students love this feature. Our university partners tell us that since launch, students across the country have purchased 1.25 million meals and opened more than 4 million doors across campuses by just tapping their iPhone and Apple Watch.”

Apple supports third-party access solutions from the likes of CBORD, Allegion and HID for this service, but the key point is that, unless third-party integrators insist on it for some reason, there is really no need for anyone to store copies of your biometric data, as that side of the equation is handled by Apple's systems on your device.

That's why, as consumer and enterprise users navigate the rapidly increasing complexity of our digitally connected world in crisis, it is important that they don't sleepwalk into dystopia by neglecting to ensure the products and services they choose are private by design.

Nothing else will do.

Please follow me on Twitter, or join me in the AppleHolic’s bar & grill and Apple Discussions groups on MeWe.

Dear reader, this is just to let you know that as an Amazon Associate I earn from qualifying purchases.